Voice AI agents are only as capable as the tools they can access during a live call. Historically, every new tool your voice AI agent needed meant a separate integration project: custom code, a new auth flow, and one more connector to keep working while calls are in progress. Anthropic introduced MCP in November 2024 to replace that pattern with a single shared protocol, and by 2026 it had become the common layer that models and tools plug into.

So how did MCP become the “USB-C port” for voice AI agents? In this post, we’ll break down the shift from messy, one-off integrations to a unified interface, and explore why MCP is redefining how voice systems scale.

MCP (Model Context Protocol) is an open standard that defines how AI agents discover, authenticate with, and communicate with external tools and data sources.

How it works: It operates over JSON-RPC 2.0, a lightweight request-response format most backend systems already support. Your agent connects once to the MCP layer, and from there, it can reach any tool that exposes an MCP server, with no new transport layer required.
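To make the JSON-RPC 2.0 framing concrete, here is a minimal sketch of the two messages an agent typically sends: `tools/list` to ask a server what it offers, and `tools/call` to invoke one tool. The method names come from the MCP specification. The tool name `lookup_order`, its arguments, and the sample response text are hypothetical, chosen only for illustration.

```python
import json


def make_request(request_id: int, method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request object."""
    return json.dumps(
        {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}
    )


def parse_response(raw: str) -> dict:
    """Return the result of a JSON-RPC 2.0 response, or raise on an error object."""
    message = json.loads(raw)
    if message.get("jsonrpc") != "2.0":
        raise ValueError("not a JSON-RPC 2.0 message")
    if "error" in message:
        raise RuntimeError(message["error"].get("message", "unknown error"))
    return message["result"]


# Ask the server which tools it exposes.
list_request = make_request(1, "tools/list", {})

# Invoke one tool. The name and arguments are hypothetical.
call_request = make_request(
    2,
    "tools/call",
    {"name": "lookup_order", "arguments": {"order_id": "A-1001"}},
)

# An illustrative response in the shape MCP uses for tool results.
sample_response = json.dumps(
    {
        "jsonrpc": "2.0",
        "id": 2,
        "result": {
            "content": [{"type": "text", "text": "Order A-1001 ships Tuesday."}],
            "isError": False,
        },
    }
)
result = parse_response(sample_response)
```

In a real deployment an MCP SDK would build and transport these messages for you. The point of the sketch is only that the payloads are plain JSON your backend can already read.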

Who governs it: In December 2025, Anthropic donated MCP to the Agentic AI Foundation (AAIF), a Linux Foundation project, co-founded by Anthropic, Block, and OpenAI, with AWS, Google, Microsoft, Bloomberg, and Cloudflare as platinum members. No single vendor controls the roadmap, so your integrations are not at risk from one company changing direction.

Adoption today: MCP has reached 97 million monthly SDK downloads and over 10,000 active public servers, with native support across Claude, ChatGPT, Gemini, Microsoft Copilot, and VS Code.

Before USB-C, every device shipped with its own cable. Before MCP, every voice AI integration required code written specifically for that connection.

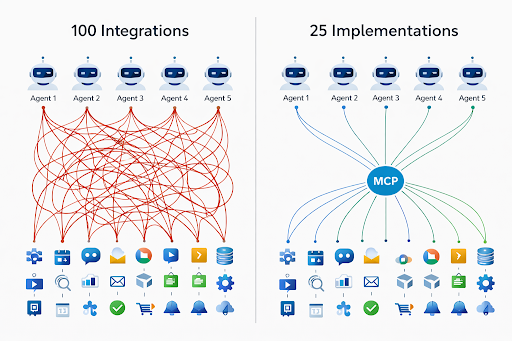

The problem it solves: This is the classic N×M integration problem. Five agents connected to twenty tools could require up to 100 unique integrations (5 × 20). MCP reduces that to N + M: 5 agents plus 20 tools equals 25 implementations, not 100.
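The arithmetic is simple enough to check directly. This small sketch compares the two counting rules and shows what adding one more tool costs under each. The function names are ours, not part of any library.

```python
def point_to_point(agents: int, tools: int) -> int:
    """Every agent needs its own connector for every tool: N x M."""
    return agents * tools


def shared_protocol(agents: int, tools: int) -> int:
    """Each agent and each tool implements the protocol once: N + M."""
    return agents + tools


custom = point_to_point(5, 20)   # 100 connectors
shared = shared_protocol(5, 20)  # 25 implementations

# Cost of adding a 21st tool under each model.
extra_custom = point_to_point(5, 21) - custom   # 5 new connectors
extra_shared = shared_protocol(5, 21) - shared  # 1 new server
```

The gap widens as the stack grows: under point-to-point integration each new tool costs one connector per agent, while under a shared protocol it costs one server.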

Why it matters for voice AI: In most systems, a broken integration produces an error message. During a live call, it produces silence and a dropped customer. Fewer integration seams directly reduce that risk.

The N×M math explains the scale of the problem. In day-to-day operation, it shows up as five specific problems.

Your agent may need to look up a record, check availability, confirm a payment, and send an SMS within a single call. Without a shared standard, each action requires its own implementation, auth flow, and error handling. Nothing is reusable across services.

Tool definitions written for one AI provider's format do not transfer when you switch providers or run two models side by side. Teams end up rebuilding identical logic for each model they adopt.

Each new tool added to your stack is a new integration project to scope, build, test, and maintain. With 20 tools across 5 agents, connector maintenance routinely crowds out new development.

API keys scattered across your agent runtime create audit exposure. In healthcare or finance, that shows up in compliance reviews, and remediation costs more than prevention.

Traditional connectors are stateless. Carrying context across multiple tool calls within one conversation requires custom middleware layered on top of already custom integrations.

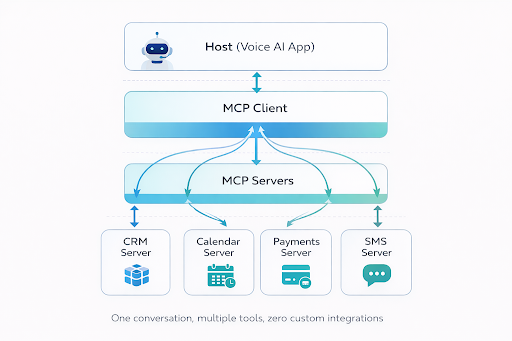

Host: Your AI application, such as a custom voice agent, Claude Desktop, or any MCP-compatible system. The host is what your caller interacts with.

Client: The protocol layer inside the host that manages server connections. One host can hold connections to multiple MCP servers simultaneously. The client translates your agent's intent into MCP requests and routes responses back.

Server: A standalone service that wraps one external tool or data source and exposes it through the standard interface. Once built, any MCP-compatible agent can use it without modification.

In practice: Your caller asks for their account balance. Your agent sends a request through the MCP client, which routes it to the CRM server, retrieves the record, and returns it mid-conversation. One round trip, no custom connector required for that specific data source.
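The balance lookup above can be sketched as a toy, in-process model of the three roles. This is not the MCP SDK and involves no network transport. `ToyServer`, `ToyClient`, `get_balance`, and the account data are all hypothetical names used to show how a request is routed.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class ToyServer:
    """Stands in for an MCP server that wraps one data source."""
    name: str
    tools: Dict[str, Callable[..., dict]]

    def call(self, tool: str, arguments: dict) -> dict:
        return self.tools[tool](**arguments)


@dataclass
class ToyClient:
    """Stands in for the client layer inside the host."""
    servers: Dict[str, ToyServer] = field(default_factory=dict)

    def connect(self, server: ToyServer) -> None:
        self.servers[server.name] = server

    def call_tool(self, tool: str, arguments: dict) -> dict:
        # Route the request to whichever connected server exposes the tool.
        for server in self.servers.values():
            if tool in server.tools:
                return server.call(tool, arguments)
        raise LookupError(f"no connected server exposes {tool!r}")


def get_balance(account_id: str) -> dict:
    """Hypothetical CRM lookup backed by a hard-coded record."""
    balances = {"acct-42": 128.50}
    return {"account_id": account_id, "balance": balances[account_id]}


# The host (your voice agent) owns the client and speaks the reply.
client = ToyClient()
client.connect(ToyServer("crm", {"get_balance": get_balance}))
record = client.call_tool("get_balance", {"account_id": "acct-42"})
reply = f"Your balance is ${record['balance']:.2f}."
```

The host code never references the CRM directly. It asks the client for a tool by name, which is what lets you swap or add servers without touching the agent.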

The OpenAI Realtime API added native MCP support in August 2025. You pass your MCP server URL into the session configuration, and mid-conversation tool calls are handled automatically, with no additional integration layer between your voice stack and your tools.
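As a rough sketch of what that session configuration can look like, the snippet below builds a `session.update` event that registers one remote MCP server as a tool. The field names follow the MCP tool shape in OpenAI's documentation as we understand it, so verify them against the current API reference before relying on them. The server label and URL are placeholders.

```python
import json


def build_session_update(server_label: str, server_url: str) -> dict:
    """Build a session.update event that attaches one remote MCP server.

    Field names are an assumption based on OpenAI's published MCP tool
    shape; check the current Realtime API reference before use.
    """
    return {
        "type": "session.update",
        "session": {
            "type": "realtime",
            "tools": [
                {
                    "type": "mcp",
                    "server_label": server_label,
                    "server_url": server_url,
                    # Whether tool calls need explicit approval first.
                    "require_approval": "never",
                }
            ],
        },
    }


# Placeholder label and URL for illustration only.
event = build_session_update("orders", "https://mcp.example.com/orders")
payload = json.dumps(event)  # what you would send over the session connection
```

Skipping approval is convenient for a demo but is a policy decision in production, especially for tools that write data or move money.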

Your caller asks about an order. Your agent identifies the order database through MCP at runtime, queries it, and reads back the tracking details. No pre-built connector, no hardcoded schema, no separate deployment required for that specific data source.

Your caller wants to reschedule. Your agent finds the calendar server, confirms available slots, and books the new time. A second MCP server sends an SMS confirmation. Two servers, one call, no human handoff.

A support call needs CRM history, insurance verification, and a new follow-up ticket. Three MCP servers handle each task within one conversation. Your agent discovers them at runtime rather than relying on preconfigured connections.
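Runtime discovery comes down to asking each connected server for its tool list and building a lookup from tool name to server. The sketch below assumes each server has already answered a `tools/list` request. The result shape (`tools`, `name`, `description`) follows the MCP specification, while the server names and tools are hypothetical.

```python
from typing import Dict


def build_tool_index(listings: Dict[str, dict]) -> Dict[str, str]:
    """Map each tool name to the server that exposes it.

    `listings` maps a server name to that server's tools/list result.
    """
    index: Dict[str, str] = {}
    for server_name, result in listings.items():
        for tool in result["tools"]:
            name = tool["name"]
            if name in index:
                # Two servers exposing the same name would make routing ambiguous.
                raise ValueError(f"tool {name!r} exposed by more than one server")
            index[name] = server_name
    return index


# Hypothetical tools/list results from three servers.
listings = {
    "crm": {"tools": [{"name": "get_history", "description": "Fetch CRM history"}]},
    "insurance": {"tools": [{"name": "verify_coverage", "description": "Check a policy"}]},
    "ticketing": {"tools": [{"name": "create_ticket", "description": "Open a follow-up"}]},
}
tool_index = build_tool_index(listings)
```

Because the index is built from what servers report rather than from hardcoded configuration, adding a fourth server changes the index without changing the agent.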

Your agent runs qualifying questions, scores the lead against your criteria, logs the result to your CRM, and routes high-value prospects to a sales rep before the call ends. The outcome is recorded without manual entry after the fact.

Current MCP deployments solve the integration problem. The roadmap addresses what comes after: agents that coordinate, share state, and operate across distributed systems in real time.

The MCP spec is being extended to support handoffs between agents. Your voice agent routes a billing query to a dedicated billing agent with the right system access, rather than managing that context itself. Each agent handles what it was built for.

Pre-built MCP servers for tools like Stripe, Salesforce, and HubSpot will be available to plug in rather than build from scratch. Over 10,000 public servers already exist. Standard integrations that previously took weeks can now take hours.

Moving MCP servers closer to where your calls originate reduces round-trip latency. In voice conversations, delays of a few hundred milliseconds register as unnatural pauses. Edge deployment closes that gap at the infrastructure level.

The 2026 MCP roadmap centers on transport scalability, enterprise governance, and production hardening. The protocol is no longer being evaluated. It is being depended on.

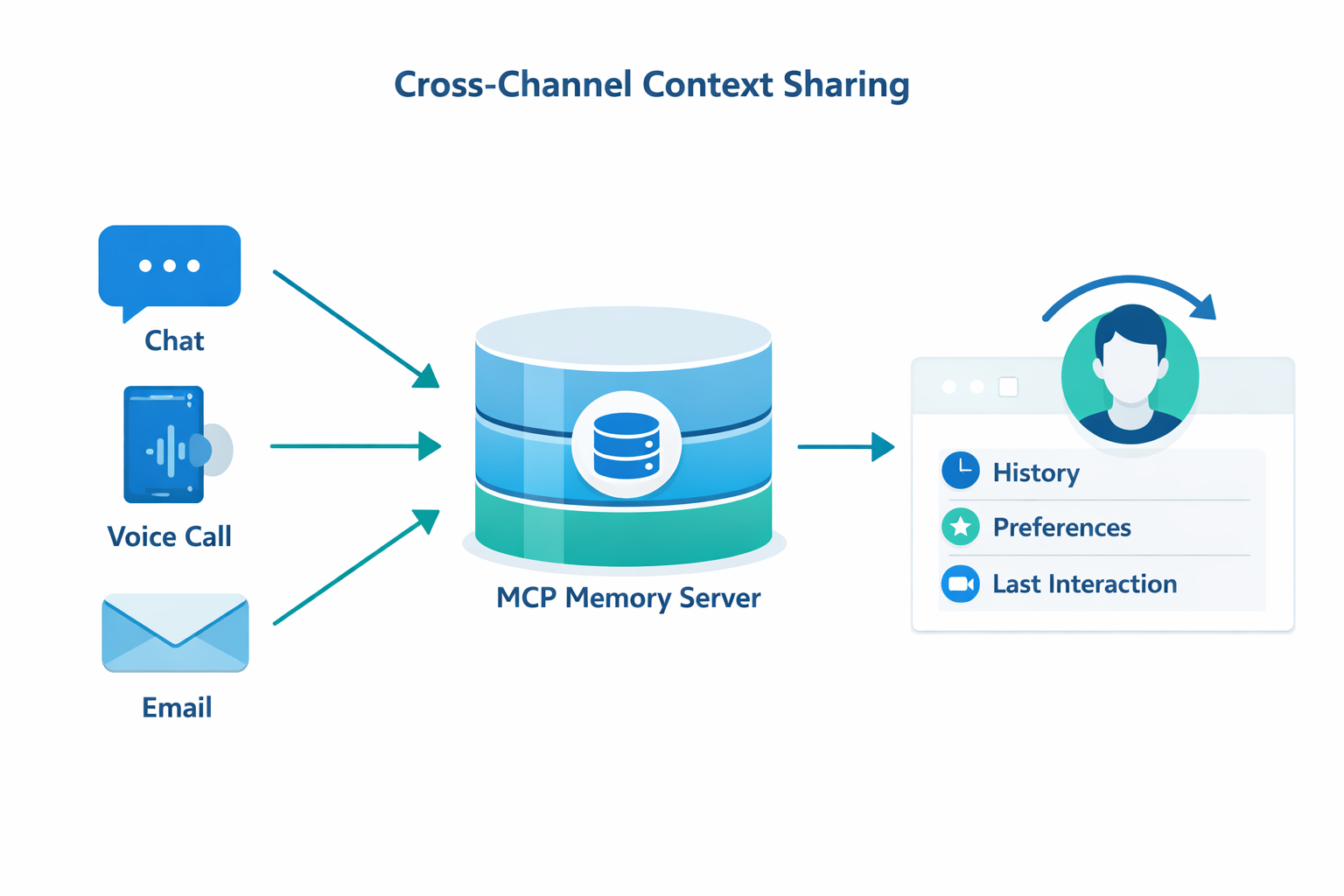

Most voice agents start every call with no memory of the caller. Your customer re-identifies themselves, re-explains their issue, and repeats information your system already holds somewhere else.

Through a persistent memory server, MCP changes that. When a repeat caller dials in, your agent loads their account status, last issue, and preferences before the first exchange. No repeated verification, no starting from scratch.
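Here is a minimal sketch of that preload step, with a dictionary standing in for the persistent memory server. The store, the phone number, the caller record, and the function names are all hypothetical. In production the lookup would be a tool call to an MCP server rather than a local read.

```python
from typing import Dict, Optional

# Stand-in for a persistent memory server, keyed by caller phone number.
MEMORY: Dict[str, dict] = {
    "+15550100": {
        "name": "Dana",
        "account_status": "active",
        "last_issue": "late delivery",
        "preferences": {"language": "en"},
    }
}


def load_caller_context(phone: str) -> Optional[dict]:
    """Return stored context for a known caller, or None for a new one."""
    return MEMORY.get(phone)


def opening_line(phone: str) -> str:
    """Choose the first thing the agent says, based on what is already known."""
    context = load_caller_context(phone)
    if context is None:
        return "Thanks for calling. Can I get your name?"
    return f"Hi {context['name']}, are you calling about your {context['last_issue']}?"


known = opening_line("+15550100")
unknown = opening_line("+15550199")
```

The lookup runs before the first exchange, so a repeat caller hears a greeting that already reflects their last issue instead of a request to identify themselves.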

The same server feeds your voice agent, chat widget, and email automation simultaneously. When a customer shifts from chat to a phone call, your voice agent continues with full context from the prior session. Both channels read from the same source, so the handoff requires no custom sync job connecting two separate systems.

CRM data, purchase history, and interaction logs become usable context across every channel. Personalization at that level scales with your data, not with how many point-to-point integrations your team can sustain.

For most businesses, connecting a voice AI agent to the tools they already use is the actual deployment challenge, not the conversation quality.

How Goodcall handles it: Goodcall builds AI phone agents for healthcare, home services, retail, and other industries through an orchestration layer that connects to thousands of apps via Zapier. You configure the connection once. Your agent then looks up records, logs call outcomes, and triggers follow-up tasks through that same connection with no code required.

Results in production: Over 42,000 agents built on Goodcall have handled more than 4.7 million calls.

What that looks like in practice:

MCP did not just reduce integration work. It changed the compounding dynamic that made voice AI deployments increasingly expensive to maintain as they scaled.

Each MCP server you build today works across every agent you deploy tomorrow, regardless of which model powers them. The work does not repeat when your stack changes. That is what makes it infrastructure rather than tooling.

Ready to simplify your voice AI stack? Start building with Goodcall today and launch MCP-powered agents faster without repeating integration work.

What is MCP in AI?

MCP (Model Context Protocol) is an open standard that lets AI agents connect to external tools and workflows through one shared protocol. Anthropic introduced it in November 2024. It is now governed by the Agentic AI Foundation, a Linux Foundation project, meaning no single company controls its direction.

Why is MCP compared to USB-C?

USB-C replaced dozens of proprietary cables with one port that works across every manufacturer. MCP replaces custom AI integrations with one protocol that works across models and tool providers. The structural problem being solved is identical.

How does MCP improve your voice AI agent?

Your agent discovers and calls tools during a live call without a pre-built connector for each one. It finds what it needs at runtime, calls the right MCP server, and gets the result back within the same conversation turn.

Is MCP only for developers?

No. Developers build and maintain MCP servers. You benefit from more capable agents without writing integration code. Platforms like Goodcall use MCP-compatible architectures, so you connect your tools through a configuration interface, not custom development.

What are MCP use cases in real life?

Customer service calls that pull live order data, appointment scheduling across calendar systems, support calls that need CRM history and ticket creation, and lead qualification with automatic CRM logging. All handled during the live call without human involvement.

Does MCP reduce AI integration costs?

Yes. Five agents connected to twenty tools without a standard can require up to 100 custom integrations. MCP reduces that to 25. One server per tool is shared across all your agents, cutting both build time and ongoing maintenance.

Is MCP the future of AI agent communication?

MCP is already the present for teams building serious voice AI. The roadmap extends it into agent-to-agent handoffs, distributed state, and edge deployment. With governance under the Linux Foundation and native support across Claude, ChatGPT, Gemini, and Microsoft Copilot, it is the protocol teams are building on, not evaluating.