Every missed call is a missed opportunity. Every call a weary receptionist takes at 6 PM on a Friday carries the risk of a forgotten note, a misspelled name, or a lead that never enters your system. The frustrating part? The technology to fix this has existed for years, but most companies still view it as a black box.

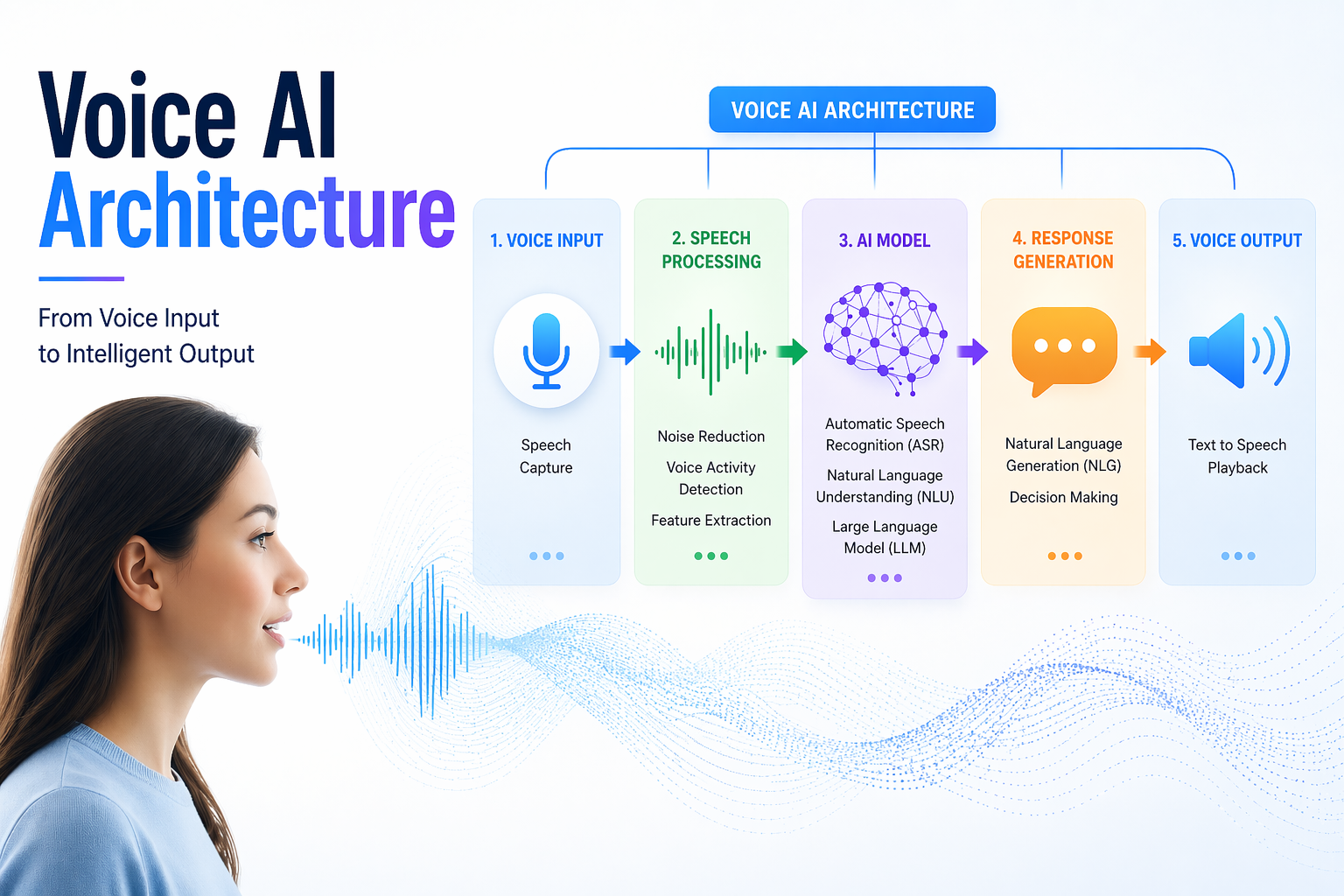

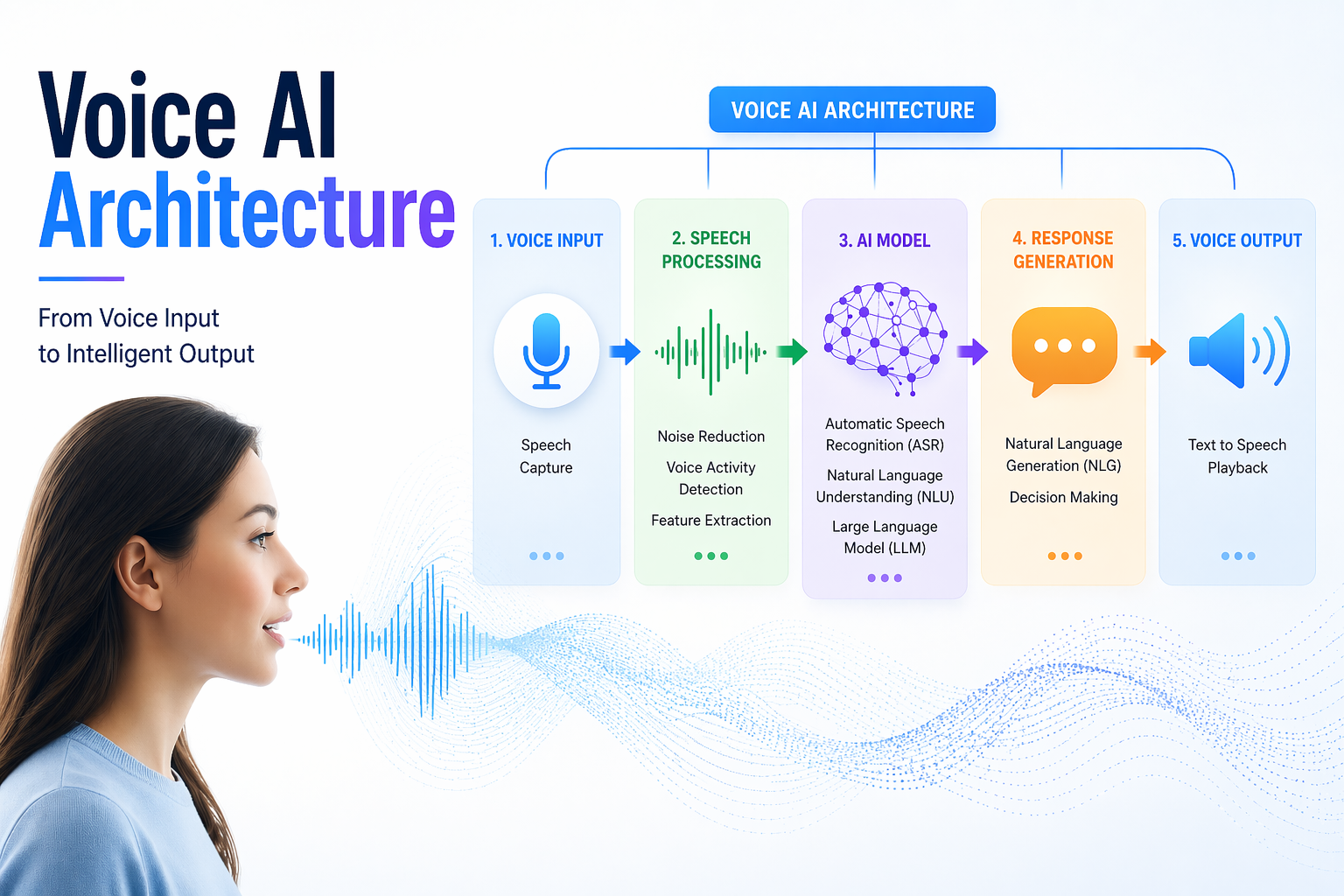

This is a breakdown of voice AI architecture, the system that takes a phone call, understands what the caller wants, and sends clean, structured data straight into your CRM. No manual entry. No dropped leads. No "I'll add it later."

Think of voice AI architecture as the nervous system of your phone lines. Just as the nervous system carries signals from your fingertips to your brain and back, this architecture carries a caller's speech from the moment they start speaking through to a spoken reply and a structured record in your CRM.

Don't think of it as a single tool. It's actually a stack of specialized technologies that pass the baton to one another in milliseconds.

Core components at a glance:

Every component serves a distinct purpose. They don't operate independently. The weakest handoff between them determines how robust the architecture is.

Now that the components are on the table, the natural question is: what actually happens during a live call? Let's walk through the journey from the first ring to the final CRM entry.

Unlike traditional IVR systems that rely on rigid "press 1 for billing, press 2 for support" menus, modern conversational AI architecture uses NLU to understand intent in natural speech, the way a person would actually say it.

Here is how voice AI works, step by step:

1. The Call Arrives: The caller dials in. The telephony layer receives the audio stream and forwards it to the ASR engine in real time, streaming it as the caller speaks rather than waiting for them to finish.

2. Audio Becomes Text: The ASR engine listens to the audio and converts it into a text transcript. The engine is trained to handle accents, background noise, and fast speech.

3. Text Becomes Intent: The NLU model reads the transcript and identifies what the caller actually wants. A caller saying "I need to move my appointment to Thursday" is not just producing words. The NLU pulls out intent (reschedule), entity (appointment), and time reference (Thursday) from the speech.

4. The Dialogue Manager Takes Over: Based on the intent, the dialogue manager decides what happens next. Does the system ask a clarifying question? Confirm the action? Transfer to a human agent? This is the decision-making layer.

5. The LLM Shapes the Response: For complex conversations, the LLM generates a natural-sounding reply that fits the context. This is what separates a rigid script from a fluid, human-like conversation.

6. The Response Is Spoken: The TTS engine converts the generated response into speech and delivers it back to the caller. The entire loop from hearing to responding typically happens in under one second in a well-built system.

7. Data Hits the CRM: Once the call concludes, or even mid-call, depending on the workflow, the orchestration engine formats the captured data and pushes it to the CRM via API. The caller's name, request, phone number, and any other captured fields are logged automatically.
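To make steps 3, 4, and 7 concrete, here is a minimal Python sketch of how a transcript might become an intent, a next action, and a CRM-ready record. Everything in it is an illustrative assumption: the keyword rules are a toy stand-in for a trained NLU model, and the names `ParsedRequest`, `parse_transcript`, `next_action`, and `build_crm_payload` are hypothetical, not part of any real platform's API.

```python
import json
import re
from dataclasses import asdict, dataclass
from typing import Optional

WEEKDAYS = ("monday", "tuesday", "wednesday", "thursday",
            "friday", "saturday", "sunday")


@dataclass
class ParsedRequest:
    intent: str               # e.g. "reschedule"
    entity: Optional[str]     # e.g. "appointment"
    time_ref: Optional[str]   # e.g. "Thursday"


def parse_transcript(transcript: str) -> ParsedRequest:
    """Toy stand-in for step 3 (NLU): keyword rules, not a trained model."""
    text = transcript.lower()
    if re.search(r"\b(move|reschedule|change)\b", text):
        intent = "reschedule"
    elif re.search(r"\b(book|schedule)\b", text):
        intent = "book"
    else:
        intent = "unknown"
    entity = "appointment" if "appointment" in text else None
    time_ref = next(
        (d.capitalize() for d in WEEKDAYS if re.search(rf"\b{d}\b", text)),
        None,
    )
    return ParsedRequest(intent, entity, time_ref)


def next_action(req: ParsedRequest) -> str:
    """Toy stand-in for step 4 (dialogue manager): pick the next move."""
    if req.intent == "unknown":
        return "transfer_to_human"
    if req.time_ref is None:
        return "ask_clarifying_question"
    return "confirm_action"


def build_crm_payload(caller_name: str, phone: str, req: ParsedRequest) -> str:
    """Step 7: format captured fields as JSON an orchestration layer could send."""
    record = {"name": caller_name, "phone": phone, **asdict(req)}
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    req = parse_transcript("I need to move my appointment to Thursday")
    print(next_action(req))
    print(build_crm_payload("Jane Doe", "+15550100", req))
```

In a production system each function would be replaced by a real service call, but the shape of the handoff stays the same: text in, structured fields out, one decision, one record.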

With the architecture mapped out, performance becomes the next honest conversation. There is a real tension at the heart of every voice system: speed versus intelligence.

Start with latency: sub-second response times are non-negotiable for caller satisfaction. A caller who hears a pause longer than 1.5 seconds after speaking is likely to assume the call has dropped, and will either repeat themselves or hang up. Both outcomes break the experience.

But accuracy and processing depth require computing time. A more capable LLM that understands complex multi-part requests takes longer to respond than a lightweight model running a limited script. This is the real-time voice AI processing challenge that every architecture team faces.
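One way to reason about this tension is a simple latency budget. The sketch below adds up per-stage latencies for one conversational turn and checks the total against a target. The stage names and millisecond figures are hypothetical placeholders chosen only to illustrate the arithmetic; real numbers have to come from measuring your own stack.

```python
# Hypothetical per-stage latencies in milliseconds (illustrative only).
STAGE_LATENCY_MS = {
    "asr_finalization": 200,
    "nlu": 50,
    "llm_small": 300,
    "llm_large": 900,
    "tts_first_audio": 150,
}


def response_latency_ms(llm_stage: str) -> int:
    """Sum the stages a single turn passes through, for a given LLM tier."""
    stages = ("asr_finalization", "nlu", llm_stage, "tts_first_audio")
    return sum(STAGE_LATENCY_MS[s] for s in stages)


def within_budget(llm_stage: str, budget_ms: int = 1000) -> bool:
    """True if the whole turn fits inside the latency budget."""
    return response_latency_ms(llm_stage) <= budget_ms


if __name__ == "__main__":
    for tier in ("llm_small", "llm_large"):
        print(tier, response_latency_ms(tier), within_budget(tier))
```

With these assumed figures, the lightweight model fits a one-second budget at 700 ms, while the larger model lands at 1300 ms: over the sub-second target, though still under the 1.5-second point where callers tend to assume the line has dropped.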

The trade-offs break down like this:

The smartest systems don’t use a "one-size-fits-all" approach. Instead, they act like a smart switchboard that routes tasks based on how hard they are:

This "brain" that decides where each task goes is called the orchestration engine. It ensures you aren't wasting a "super-brain" on a task a basic calculator could handle.
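As a rough illustration of that routing idea, the Python sketch below sends intents with a known, fixed answer to a scripted tier and everything else to an LLM tier. The tier names, the `SCRIPTED_REPLIES` table, and the placeholder `llm_handler` are all assumptions made for this example; a real orchestration engine would call an actual model and would likely weigh more signals than the intent name.

```python
from typing import Callable, Dict

# Hypothetical intents that have a fixed answer and need no language model.
SCRIPTED_REPLIES: Dict[str, str] = {
    "business_hours": "We are open 9 to 5, Monday through Friday.",
    "callback_request": "Thanks, someone will call you back shortly.",
}


def scripted_handler(intent: str) -> str:
    """Fast path: look up a canned reply."""
    return SCRIPTED_REPLIES[intent]


def llm_handler(intent: str) -> str:
    """Slow path placeholder: a real system would call a language model here."""
    return f"[LLM-generated reply for '{intent}']"


def route(intent: str) -> str:
    """Decide which tier should handle the intent."""
    return "scripted" if intent in SCRIPTED_REPLIES else "llm"


def respond(intent: str) -> str:
    """Dispatch the intent to the handler for its tier."""
    handlers: Dict[str, Callable[[str], str]] = {
        "scripted": scripted_handler,
        "llm": llm_handler,
    }
    return handlers[route(intent)](intent)


if __name__ == "__main__":
    print(route("business_hours"), "|", respond("business_hours"))
    print(route("multi_part_reschedule"), "|", respond("multi_part_reschedule"))
```

The design choice here is that the cheap path is the default for anything it can answer, so the expensive model only spends time on requests that actually need it.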

Performance depends on what specific technologies power each layer. Forget the jargon for a second. Let’s look at this through three simple buckets: Hearing, Thinking, and Speaking.

These buckets describe what the technology does, not just what it is called.

Hearing: The ASR Layer

This is the speech-to-text AI pipeline that converts audio into usable text. Leading ASR engines include Google's Speech-to-Text, AWS Transcribe, and Deepgram. What separates a strong ASR layer from a weak one is how it handles noise, accents, and domain-specific vocabulary, such as medical terms, legal phrases, brand names, and product SKUs.

Thinking: NLU and LLM

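Once the words are captured, a thinking layer takes over and performs two key roles: the NLU identifies what the caller wants, and the LLM shapes a natural-sounding reply that fits the context.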

By combining these two, the system stops feeling like a rigid "Press 1 for Support" bot and starts feeling like a real representative who actually understands you.

Speaking: The TTS Engine

Text-to-Speech engines have improved dramatically. Modern TTS solutions produce speech that is nearly indistinguishable from a human voice in controlled conditions. The choice of the TTS engine directly affects caller trust. A robotic voice causes people to disengage. A natural voice keeps them on the line.

Having established what the architecture is made of and how it performs, the more grounded question is: where does this actually get deployed, and what kind of return do businesses see?

Here are the key industries accounting for the highest-ROI deployments of AI call handling systems today:

1. Dental and Medical Practices (Scheduling and Reminders)

Medical front desks are overwhelmed. Staff spend hours each week managing appointment calls, confirming visits, and relaying information that a voice system can handle at scale. Implementing an AI Voice Agent for Appointment Booking allows medical and service-based businesses to fill their calendars without human intervention, even after office hours.

2. Home Services (Lead Capture)

A plumber or HVAC company running three technicians in the field cannot always answer a call at 2 PM on a Tuesday. Voice AI captures the caller's name, address, problem description, and preferred time window, then stores them in the CRM before the technician has finished the current job.

3. E-commerce and Retail (Order Tracking and Returns)

Customers calling to track orders or initiate returns are asking repetitive, structured questions. An AI call handling system handles these calls end-to-end with no human involvement, freeing support staff to handle escalations and complex cases that actually require judgment.

Voice AI transforms raw conversations into structured, actionable CRM data in real time, eliminating manual effort and delays. Here’s how this automation delivers measurable advantages across operations and customer experience:

Credibility comes from acknowledging where voice AI can fall short. Here are the key limitations to address:

Language models can generate confident but incorrect responses, such as confirming unavailable slots or incorrect pricing. Guardrails like CRM verification and fallback logic are essential to minimize risk.
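A guardrail of this kind can be as simple as checking the model's proposed answer against the system of record before it is spoken. The sketch below assumes a hypothetical `available_slots` set that has already been fetched from a CRM or calendar; the function name and reply wording are illustrative, not any vendor's implementation.

```python
from typing import Set


def confirm_or_fallback(proposed_slot: str, available_slots: Set[str]) -> str:
    """Only confirm a slot the system of record says is actually open."""
    if proposed_slot in available_slots:
        return f"You're booked for {proposed_slot}."
    if available_slots:
        # Offer verified alternatives instead of trusting the model's guess.
        alternatives = ", ".join(sorted(available_slots))
        return f"That time isn't available. I can offer: {alternatives}."
    # Nothing verifiable to offer: hand off rather than improvise.
    return "Let me connect you with a team member to find a time."


if __name__ == "__main__":
    open_slots = {"Thursday 10:00", "Thursday 14:00"}
    print(confirm_or_fallback("Thursday 10:00", open_slots))
    print(confirm_or_fallback("Friday 09:00", open_slots))
    print(confirm_or_fallback("Friday 09:00", set()))
```

The point is that the language model proposes, but verified data decides what the caller actually hears.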

Background noise, accents, or weak signals can reduce transcription accuracy and impact downstream processing. While noise cancellation helps, challenging environments still affect performance.

Issues like unnatural pauses, mispronunciations, or monotone delivery can make interactions feel artificial. Customizing voice tone and persona is critical to maintain user trust.

Business owners often ask, "Is Voice AI safe?" Voice AI systems must adhere to regulations, such as HIPAA, PCI, and regional consent laws. Failing to embed compliance into architecture can expose businesses to legal and financial risks.

Goodcall streamlines the entire Voice AI architecture by seamlessly capturing, transcribing, and structuring call data into CRM-ready formats in real time. It integrates directly with business systems to automate workflows like lead creation, ticketing, and follow-ups without manual intervention. This end-to-end automation ensures faster operations, higher data accuracy, and a scalable foundation for customer communication.

How Goodcall Powers End-to-End Voice AI Automation:

Before wrapping up, here is the practical starting point for any business considering a deployment. Use this as a readiness audit before approaching any vendor conversation.

The businesses that see the fastest ROI from voice AI are the ones that did the groundwork first. A well-scoped deployment that handles 80% of call types cleanly is far more valuable than an ambitious one that handles 100% of call types poorly.

What is voice AI architecture?

It is the layered stack of technologies (telephony, ASR, NLU, LLM, TTS, and CRM APIs) that work together to receive a spoken call, understand it, answer it, and automatically record the result.

How long does it take to implement voice AI?

A platform like Goodcall can be configured and live within days for most businesses. Custom enterprise deployments with complex integrations can take several weeks.

Can voice AI handle calls in multiple languages?

Yes, depending on the ASR and NLU models selected. English is universally supported. Spanish, French, and other languages are increasingly available across leading platforms.

What happens when the voice AI cannot understand a caller?

A well-configured system has a fallback protocol that transfers the caller to a human agent after a set number of failed intents. No system should leave a caller stuck in a loop.
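As a minimal sketch of such a fallback protocol, the Python below counts failed intents and escalates once a threshold is reached. The class name `FallbackTracker`, the default threshold of two, and the choice to count consecutive failures (resetting after a successful turn) are assumptions for illustration; the right threshold depends on your callers and call types.

```python
from dataclasses import dataclass


@dataclass
class FallbackTracker:
    max_failures: int = 2   # hypothetical threshold; tune per deployment
    failures: int = 0

    def record(self, intent_recognized: bool) -> str:
        """Return the next step after one conversational turn."""
        if intent_recognized:
            self.failures = 0          # a good turn resets the count
            return "continue"
        self.failures += 1
        if self.failures >= self.max_failures:
            return "transfer_to_human"
        return "reprompt"


if __name__ == "__main__":
    tracker = FallbackTracker()
    print(tracker.record(False))
    print(tracker.record(False))
```

Because the counter has a hard ceiling, the caller can only be reprompted a bounded number of times before reaching a person.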

Is voice AI only for large businesses?

No. Small service businesses such as dental offices, plumbers, and hair salons often see the best ROI, because they tend to have the highest ratio of unanswered calls to available staff.

Does voice AI replace receptionists?

It handles repetitive, structured calls so that human staff can focus on complex interactions, escalations, and relationship-building. It augments the team rather than replaces it.

How secure is the data captured by voice AI?

Security depends entirely on the provider. Look for HIPAA and PCI compliance, encrypted storage, role-based access controls, and transparent data retention policies before signing with any vendor.